Ai.step (JP)

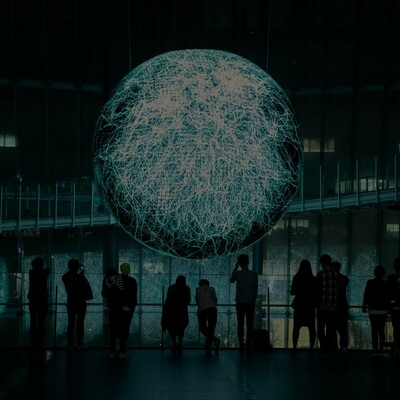

Ai.step is an audiovisual performance unit consisting of new media artists Kakuya Shiraishi and Scott Allen. In their performance, Shiraishi and Allen collaborate with an AI algorithm that live codes in the programming language TidalCycles. During the performance, the AI continuously generates code that the performers select or dismiss, making the performance a joint exploration of new sonic territory between human and machine. The combined feed of data and emotions, jointly generated by the performers and the algorithm, constitutes the input for an audio-reactive visualization system, thus completing the audiovisual performance.

Kakuya Shiraishi is a graduate of the Interaction Design Course of Tama Art University. As such, he is deeply invested in exploring the nature of interaction between human performers, machines as collaborators, and audience feedback. Scott Allen is a visual media artist and a graduate of the leading media art institution IAMAS (Institute of Advanced Media Arts and Sciences). Allen’s take on visuals is based on the concept of Zogaku, the idea of approaching visuals the way one would play a musical instrument.

Ai.step is a performance unit consisting of musician Kakuya Shiraishi and visual artist Scott Allen. In their performance, Shiraishi and Allen collaborate with an AI algorithm that live codes in the programming language TidalCycles, synthesizing unpredictable sonic endeavours that feed into generative data visualization.

Who

Japanese audiovisual unit formed by Kakuya Shiraishi and Scott Allen, combining live coding, data visualization and deep learning.

Other

In their performance, Shiraishi and Allen collaborate with an AI algorithm that live codes in the programming language TidalCycles, synthesizing new sounds and reactive visuals.